A “strikingly realistic” short AI video

The Wall Street Journal explains how it put together a “strikingly realistic” short AI video using Google’s Veo 3 and Runway.

A few years ago, I saw a cartoon of a man on his deathbed saying, “I wish I’d bought more crap.” It has always amazed me that many wealthy people keep working to increase their wealth, amassing far more money than they could possibly spend or even usefully bequeath. One day I asked a wealthy friend why this is so. Many people who have gotten rich know how to measure their self-worth only in pecuniary terms, he explained, so they stay on the hamster wheel, year after year. They believe that at some point, they will finally accumulate enough to feel truly successful, happy, and therefore ready to die. This is a mistake, and not a benign one.

Arthur C. Brooks writing in The Atlantic

It is not strength but desire that moves us.

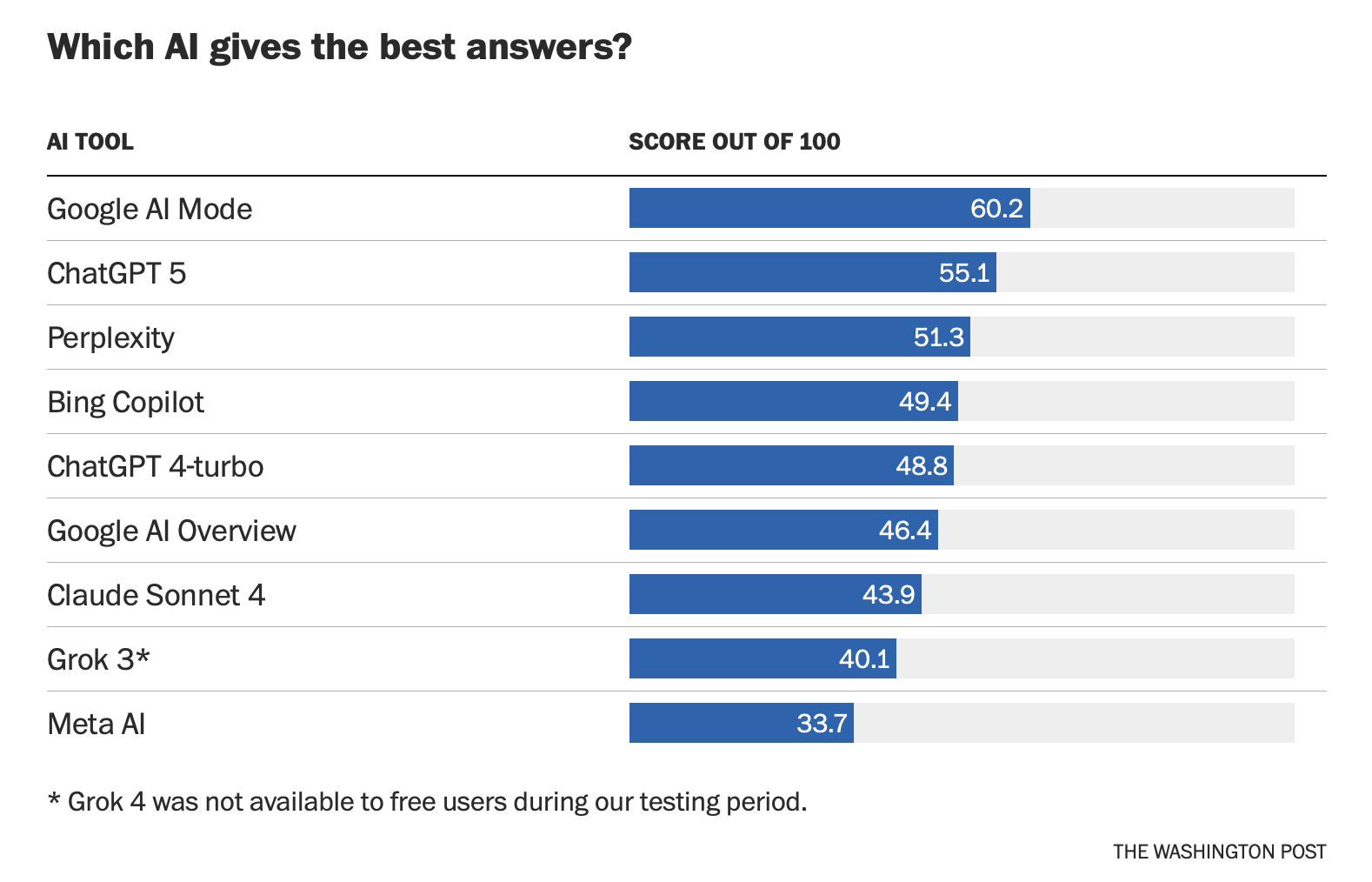

"A truth about today’s AI tools: They’re not really information experts. They have challenges determining which source is the most authoritative and most recent. It’s fair to ask whether relying on any of these AI tools as your new Google is a good idea. In many ways, AI is best suited for complex questions that take some hunting. In the best cases, AI tools could find needles in a haystack — answers that weren’t obvious in a traditional Google search." - Washington Post

American Society of Business Publication Editors

American Society of Journalists and Authors

The Arab and Middle Eastern Journalists Association

Arab Reporters for Investigative Journalism

Asian American Journalists Association

European Federation of Journalists

Global Investigative Journalism Network

Indigenous Journalists Association

The Institute for Independent Journalists

Investigative Reporters and Editors

The Journalists and Writers Foundation

Military Veterans in Journalism

National Association of Black Journalists

National Association of Hispanic Journalists

NLGJA: The Association of LGBTQ Journalists

Public Media Journalism Association

Radio Television Digital News Association

Society for Features Journalism

Keep turning the pages that need to be turned. -Angel Chernoff

A recent graduate triple-majored in computer science, math, and computational science and has completed the coursework for a computer-science Ph.D. He would prefer to work instead of finishing his degree, but he has found it almost impossible to secure a job. “We’re in an AI revolution, and I am a specialist in the kind of AI that we’re doing the revolution with, and I can’t find anything.” -The Atlantic

What the panic about kids using AI to cheat gets wrong - Vox

How AI Is Changing—Not ‘Killing’—College – Inside Higher Ed

AI Makes Research Easy. Maybe Too Easy. – Wall Street Journal

The Computer-Science Bubble Is Bursting – The Atlantic

Students Are Using ChatGPT to Write Their Personal Essays Now – Chronicle of Higher Ed

These workers don’t fear artificial intelligence. They’re getting degrees in it. – Washington Post

Almost all the class of 2026 are using AI to do their work – The Atlantic

Duke Just Introduced An Essay Question About AI—Here’s How To Tackle It - Forbes

ChatGPT’s Study Mode Is Here. It Won’t Fix Education’s AI Problems – Wired

AI is helping students be more independent, but the isolation could be career poison – The Markup

I'm a college writing professor. How I think students should use AI this fall - Mashable

ChatGPT's new study mode won't give you the answers - Axios

University students feel ‘anxious, confused and distrustful’ about AI in the classroom and among their peers – The Conversation

I Teach Creative Writing. This Is What A.I. Is Doing to Students. – New York Times

How Are Students Really Using AI? Here’s what the data tell us. - Chronicle of Higher Ed

So long, study guides? The AI industry is going after students – NPR

At one elite college, over 80% of students now use AI – but it’s not all about outsourcing their work - The Conversation

Students have been called to the office — and even arrested — for AI surveillance false alarms – Associated Press

AI in education's potential privacy nightmare - Axios

AI to the Rescue It’s an all-purpose study tool — it’s changing students’ relationships with professors & peers - Chronicle of Higher Ed

We "sell out" whenever we fail to take ownership of who we are. It's much easier to default to the expectations of friends/work/society/church rather than taking responsibility for our thinking and actions. Turning control over of what we have been entrusted with to someone (or something) else is an attempt to take the responsibility off our shoulders, so there’s someone else to blame.

World modeling – These are AI systems that can learn how the world works much as humans do, as opposed to large language models (LLMs), neural networks that have little grasp of logic and lack common human reasoning. LLMs work on correlations within language rather than its connection to the world.

More AI definitions here

“We have started seeing employers saying things like, 'If you have that computer science degree, do you have a philosophy minor so you can help me think about the ethical implications of what I'm building?'” – Aneesh Raman, chief economic opportunity officer at LinkedIn

A group of librarians tested AI search tools for accuracy. Here are the results:

Read more at The Washington Post

AI Advice for Students

1- Think Beyond Academic Integrity

Not just “Is this cheating or not cheating?”

But also, “Am I taking the opportunity to learn, practice, and cultivate my skills?”

To some students, college now feels like a test of “How well can I use ChatGPT?” Others describe writing essays as a coordination problem: get the prompt, feed it to the bot, skim the output, add some filler, hit submit. No thinking required, just interface management.

2- Define Your Own Educational Goals

Ask yourself: “Besides grades, what are my goals as a student?”

Prioritize learning and skill development

Seize opportunities to get the practice you need to become a better thinker, writer, and communicator.

3- Prompt to Challenge Your Thinking

Instead of outsourcing your thinking (“Suggest a thesis statement I can use for my essay.”), look for ways to think critically about the subject (“Ask me tough questions to help me figure out my thesis statement.”).

Don’t just ask, “Am I outsourcing the writing to AI?” Ask, “Am I outsourcing the thinking to AI?” We must use AI to expand our mind’s capacity to engage, rather than using it to outsource our thinking.

4- Focus on AI Literacy & Integration

Unless you want to build AI systems and become a data scientist, focus on taking outdated processes and updating them to make use of the available AI tools. Understanding the benefits and limitations of AI in light of ethics should be the goal, along with figuring out how to mesh it into your workday.

5- Double Down on Your Humanity

• We can’t let it strip us of our humanity.

• Optimistically, AI may be “a piece of technology that, instead of replacing humanity, amplifies it.”

• We must retain oversight & not lose ourselves by depending on the machine.

• Doubling down on what makes you human may be what saves you from being replaced or minimized by AI.

6- Get Well-Rounded

Be well-rounded in the liberal arts: think of your gen ed classes as core classes now. Focus specifically on growing these skills: analytic thinking, creativity, information literacy, resilience, agility, leadership, self-motivation, empathy, curiosity. Their value will rise as AI takes over routine tasks.

7- Distinguish between AI-generated content, AI-assisted content, & AI-supplemented content

Group A ❌: AI-generated content

Group B ✅: AI-assisted content/writing

Group C 🤔: AI-supplemented content

Facilitated writing/learning

AI-generated content ❌ is entirely produced by the AI or sections are produced by the AI, based on detailed instructions (prompts) provided by the author. Some AI is best thought of as a set of automation tools that function as closed systems that do their work without oversight—like ATMs and dishwashers.

In academia, it is not acceptable under normal circumstances unless there is a significant and clear reason why it was necessary. However, in business, it is likely to be treated as acceptable when the content is merely informational and not intended to be creative. The focus in this situation is accuracy and speed with minimal effort as opposed to authenticity. Examples include a summary of a business meeting or an email answering a particular question about the business, where it is assumed the writer may incorporate AI-generated content.

Group B ✅ is work that is predominantly written by an individual but has been improved with the aid of AI tools. AI is part of the process. The author remains in control, and the AI merely acts as a polishing tool. As opposed to automation tools, these are collaboration tools, like chain saws and word processors. In any given application, AI is going to automate or it’s going to collaborate, depending on how we design it and how someone chooses to use it.

This kind of assistance is generally accepted by most publishers as well as the Committee on Publication Ethics, without the need for formal disclosure. This includes: creating outlines, improving clarity, grammar, summarizing, brainstorming, generating transcription, condensing notes, creating study guides, practice questions, editing, and suggesting alternative approaches to a problem.

Group C 🤔 includes changing phrasing, generating a citation list, revising sentence structure, reducing word count, etc. Writers and publishers disagree about whether using AI in this way is ethical or not.

Keep away from people who try to belittle your ambitions. Small people always do that, but the really great make you feel that you, too, can become great. -Mark Twain

The question is not whether AI can do things that experts cannot do on their own—it can. Expert humans often bring something that today’s AI models cannot: situational context, tacit knowledge, ethical intuition, emotional intelligence, and the ability to weigh consequences that fall outside the data. The value is not in substituting one expert for another, or in outsourcing fully to the machine, or indeed in presuming the human expertise will always be superior, but in leveraging human and rapidly-evolving machine capabilities to achieve best results. -David Autor and James Manyika writing in The Atlantic

Major Broadcast Networks

Aggregate Sites

eNewsletters

Aggregation Apps

Flipboard (you’ll need to mute weak & non-news sites)

Explainer Journalism

Wonkblog (WaPo)

The Conversation (articles written by university and research experts)

National News

NPR (morning edition)

Washington Post (list of daily print articles)

New York Times (list of daily print articles)

Tech

BBC (future section)

Politics

Washington Post (politics section)

Investigative Journalism

Education

Business

Economist (sub. req.)

Science

New York Times (science section)

Religion

Washington Post (Acts of Faith section)

Health

New York Times (health section)

News Media

Opinion

When we spend our lives waiting until we're perfect or bulletproof before we walk into the arena, we ultimately sacrifice relationships and opportunities that may not be recoverable, we squander our precious time, and we turn our backs on our gifts, those unique contributions that only we can make.

We must walk into the arena, whatever it may be—a new relationship, an important meeting, our creative process, or a difficult family conversation—with courage and a willingness to engage. Rather than sitting on the sidelines and hurling judgment and advice, we must dare to show up and let ourselves be seen. This is vulnerability. This is daring greatly.

Brené Brown, Daring Greatly

Invention, it must be humbly admitted, does not consist in creating out of void, but out of chaos. -Mary Shelley (born Aug. 30, 1797)

The trick is, when there is nothing to do, do nothing. -Warren Buffett (born Aug. 30, 1930)

Generative AI is making it exponentially easier for China to create believable, engaging content. GoLaxy, which operates in close alignment with the Chinese government's interests, appears to be tapping generative AI to mine social media profiles and create content that "feels authentic, adapts in real-time and avoids detection." Documents show that GoLaxy has created profiles for at least 117 members of Congress and over 2,000 American political figures and thought leaders. -Axios

Becoming is a service of Goforth Solutions, LLC / Copyright ©2026 All Rights Reserved