A Summary of 3 Major AI Legal Issues

There are dozens of lawsuits pending over the use of AI. Here are three of the major issues facing the courts.

1a. Copyright & AI: Creating with AI

Defined: Copyright law is about protecting original expression that’s been “fixed in any tangible medium.”

Current Law: Purely AI-generated content can't be copyrighted because an AI cannot hold a copyright and the output is not considered to be the work of a human creator. When AI is combined with human effort, the US Copyright Office determines whether a work is protected based on how much of it comes from the AI versus the human author.

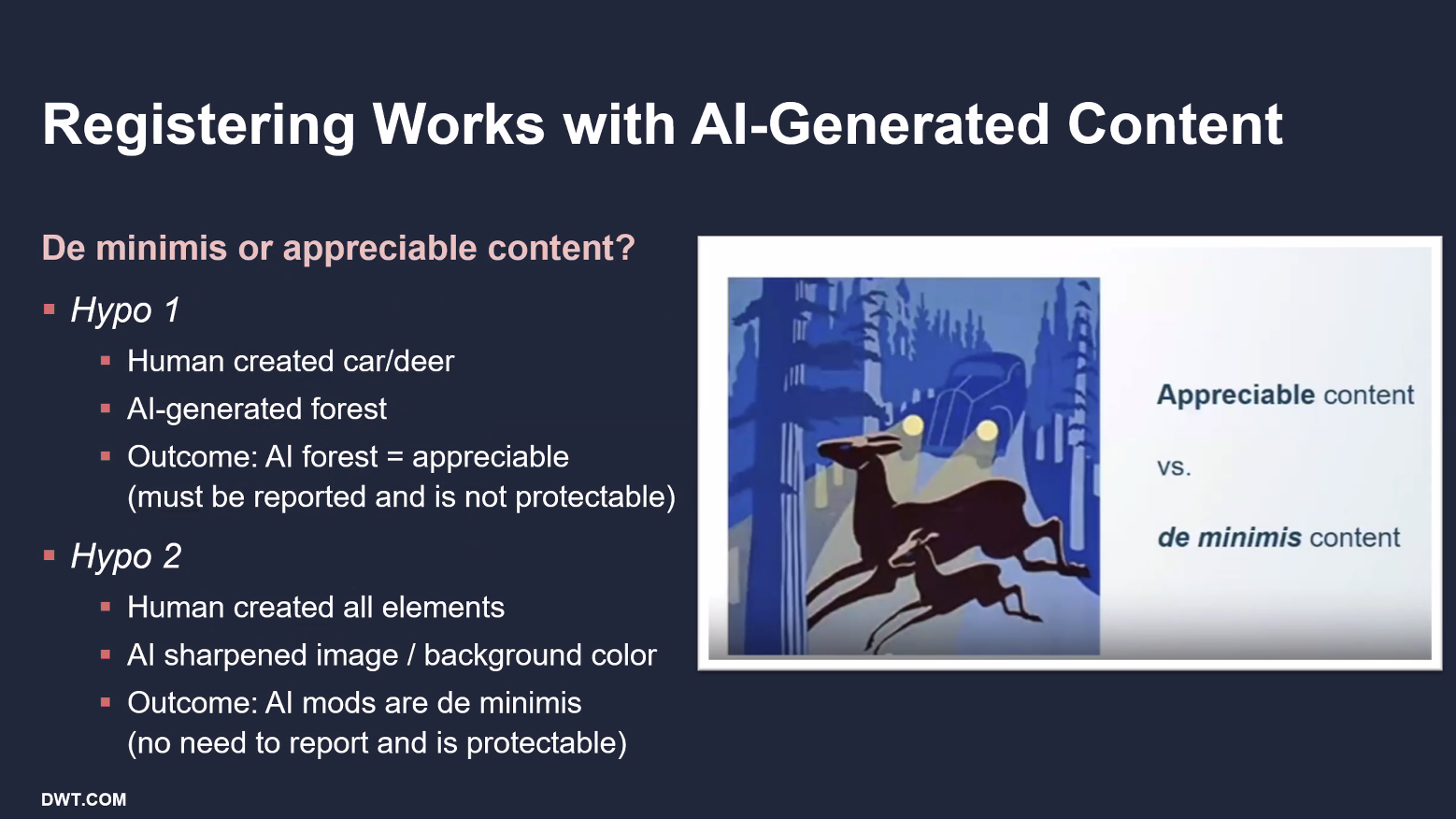

This image from a US Copyright Office presentation illustrates:

Do you think this is a copyright violation?

In November 2025, Getty Images largely lost its London lawsuit against the artificial intelligence company Stability AI over Stability's image generator. While the suit failed on copyright grounds, Getty succeeded "in part" on trademark infringement in relation to Getty watermarks.

1b. Copyright & AI: AI Training

Defined: Training data is the data initially provided to an AI model so it can create a map of relationships, which it then uses to make predictions. Giving the AI a wide range of data means more options and may lead to more creative results. Issues arise when AI companies use copyrighted content for training without first receiving permission from the copyright holders.

Current Law: There is no law that directly applies to using copyrighted material for training an AI. The Copyright Office has left the door open to considering it as a possibility, apparently waiting until the courts rule on the issue.

Unresolved Legal issues: There are more than 30 active lawsuits between AI companies and creators over whether permission is needed before copyrighted material can be used as training data. Some AI companies argue that their use falls under the legal concept of “fair use,” which holds that there are exceptions to the copyright rules when the material is used for things like education and news.

Example: So far, Anthropic and Meta have successfully argued in lower courts that their use of copyrighted books was "exceedingly transformative." However, authors whose works were allegedly pirated can receive compensation as part of a $1.5 billion settlement with Anthropic.

2. Right of Publicity & AI

Defined: The Right of Publicity is the right of individuals to control the commercial use of their name, image, likeness, or voice (NIL).

Current Law: There is no federal NIL law, only state laws that are not entirely consistent. Tennessee has what’s called the ELVIS Act, which protects individuals from AI voice cloning and unauthorized digital replicas. New York has a similar law. One area of the law that remains murky is what constitutes a commercial use of AI. A bill was introduced in Congress in 2024 that would forbid AI-generated replicas of people without their permission. However, the legislation hasn’t been voted on in the House or Senate.

Unresolved Legal issues: Are unauthorized clones in ads, music, and social media a violation of NIL laws? Or are they an expression of First Amendment rights?

Example 1: Grammarly’s “Expert Review”

This feature promised feedback on your writing from the perspective of famous authors, journalists, and academics. It has since been deactivated and is now the subject of a class-action lawsuit. The writers claim that the tool wasn’t very good, so it made them look bad.

Go Deeper: Should you be allowed, legally, to use AI to “write like yourself”?

Should you be allowed to use AI to write like someone else? Would it make it more acceptable to train an AI on someone’s voice or image if the creative work used was first legally purchased?

Example 2: Matthew McConaughey

The actor has secured eight trademarks from the U.S. Patent and Trademark Office to protect his likeness and voice from unauthorized AI use. McConaughey’s lawyers believe that the threat of a lawsuit in federal court would help deter misuse, though the outcome of an actual court fight would be uncertain.

Go Deeper: There is a difference in how the law considers public vs. private citizens when it comes to issues of defamation. Should there also be a difference in how we treat the AI replication of a public figure?

How does generative AI challenge our traditional understanding of personal identity?

3. Liability

Defined: Businesses are responsible for damage caused by AI.

Current Law: Areas of risk include intellectual property infringement, data breaches, bias, and defamation. Improper AI use by employees, particularly deepfakes, could expose businesses to harassment and discrimination claims.

Unresolved Legal issues: Who is responsible when AI is used for harm? Are businesses responsible when employees use AI tools to create doctored images and audio targeting coworkers?

Examples:

In Maryland, a school employee was sentenced to jail after using AI to create a racist deepfake recording of a principal.

A Nashville television meteorologist sued her former station after management failed to adequately address deepfake sexual images created using her likeness.

Employers using AI to screen candidates’ social media could be held responsible for bias and false inferences. Cultural or linguistic styles, such as code-switching, slang, sarcasm, and memes, could lead to misclassification by bots. AI tools don’t understand context or sarcasm and are at risk of misreading humor, quotes, or historical posts. Reviews of social media feeds can also reveal religion, disability, pregnancy, age, and a host of other factors that should not be considered at the time of hire.

Go Deeper: AI in employee handbooks

Other concerns: freelancing contracts